(Dive into Deep Learning) Chapter 7 Deep Convolutional Neural Networks: AlexNet

AlexNet explained, with a code implementation


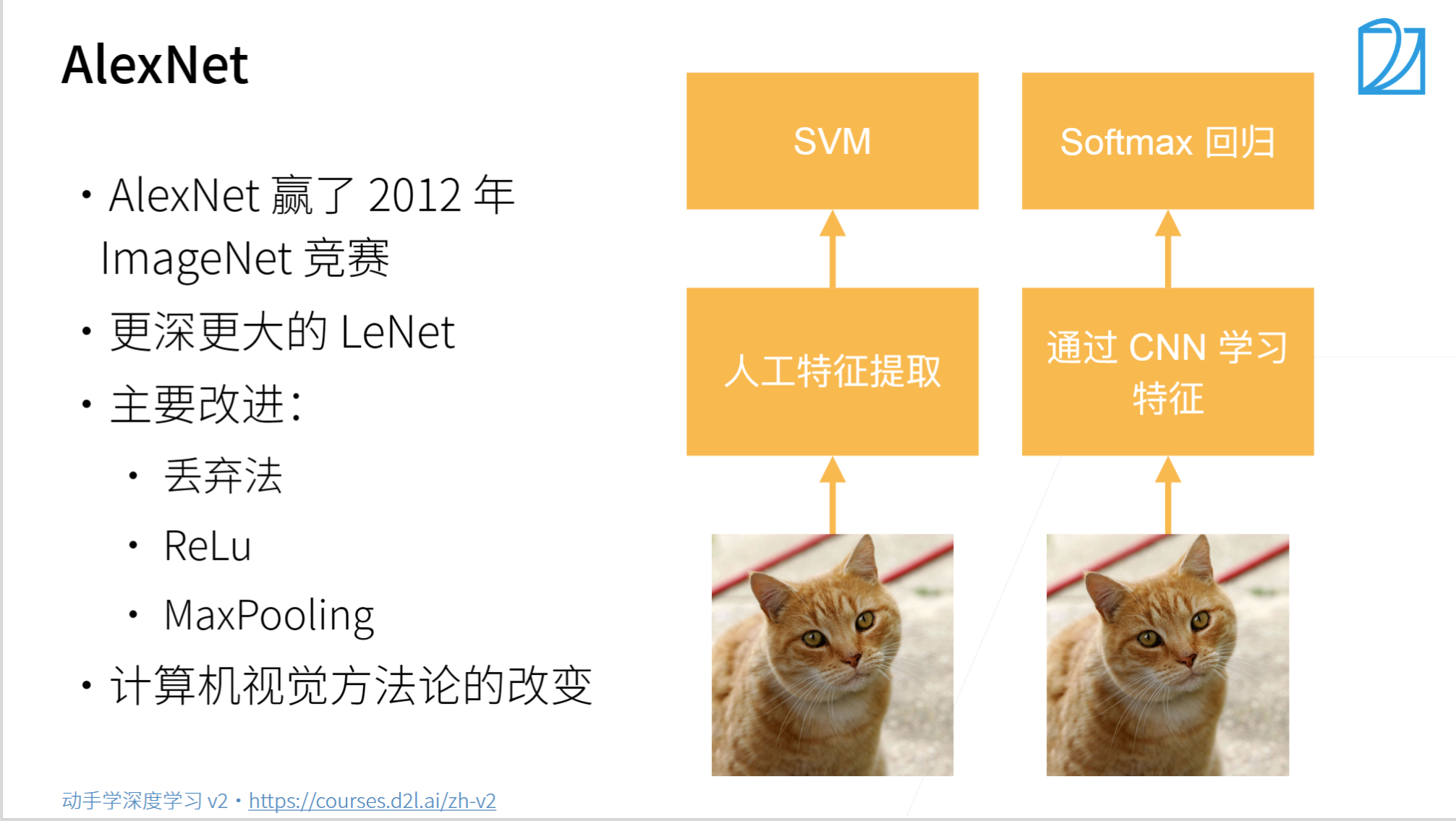

AlexNet

Summary:

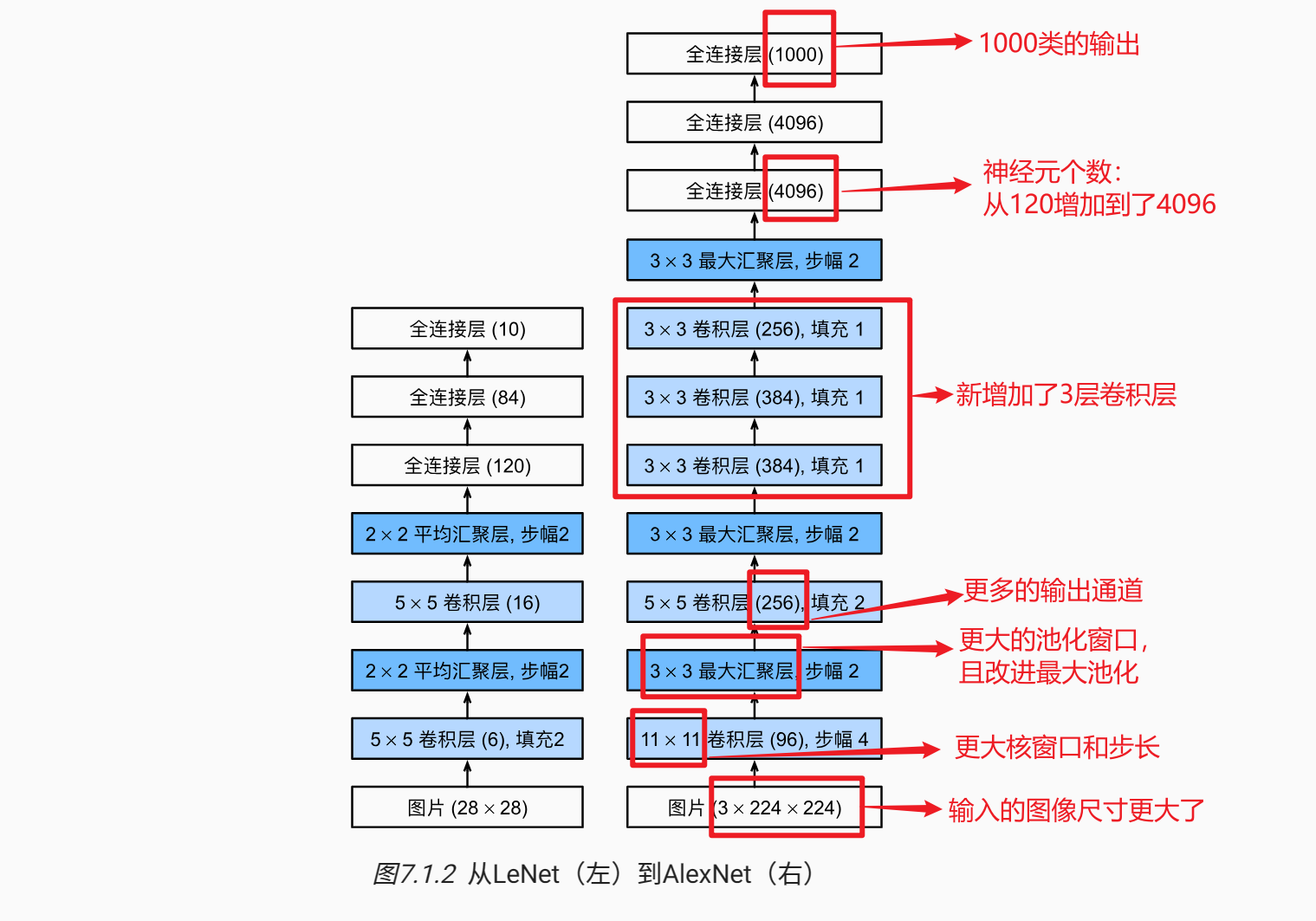

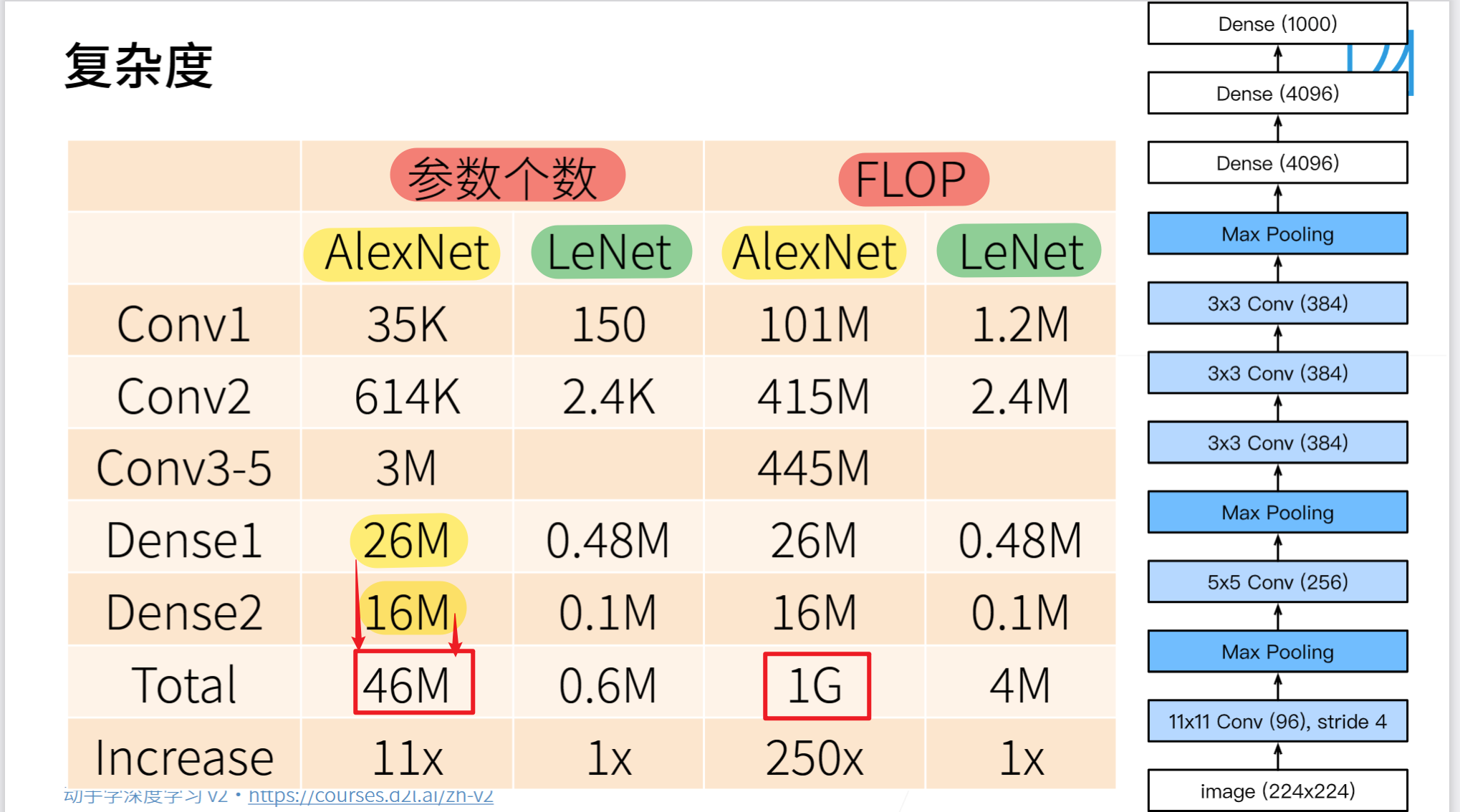

- AlexNet is a bigger, deeper LeNet, with about 10× as many parameters and 260× the computational cost.

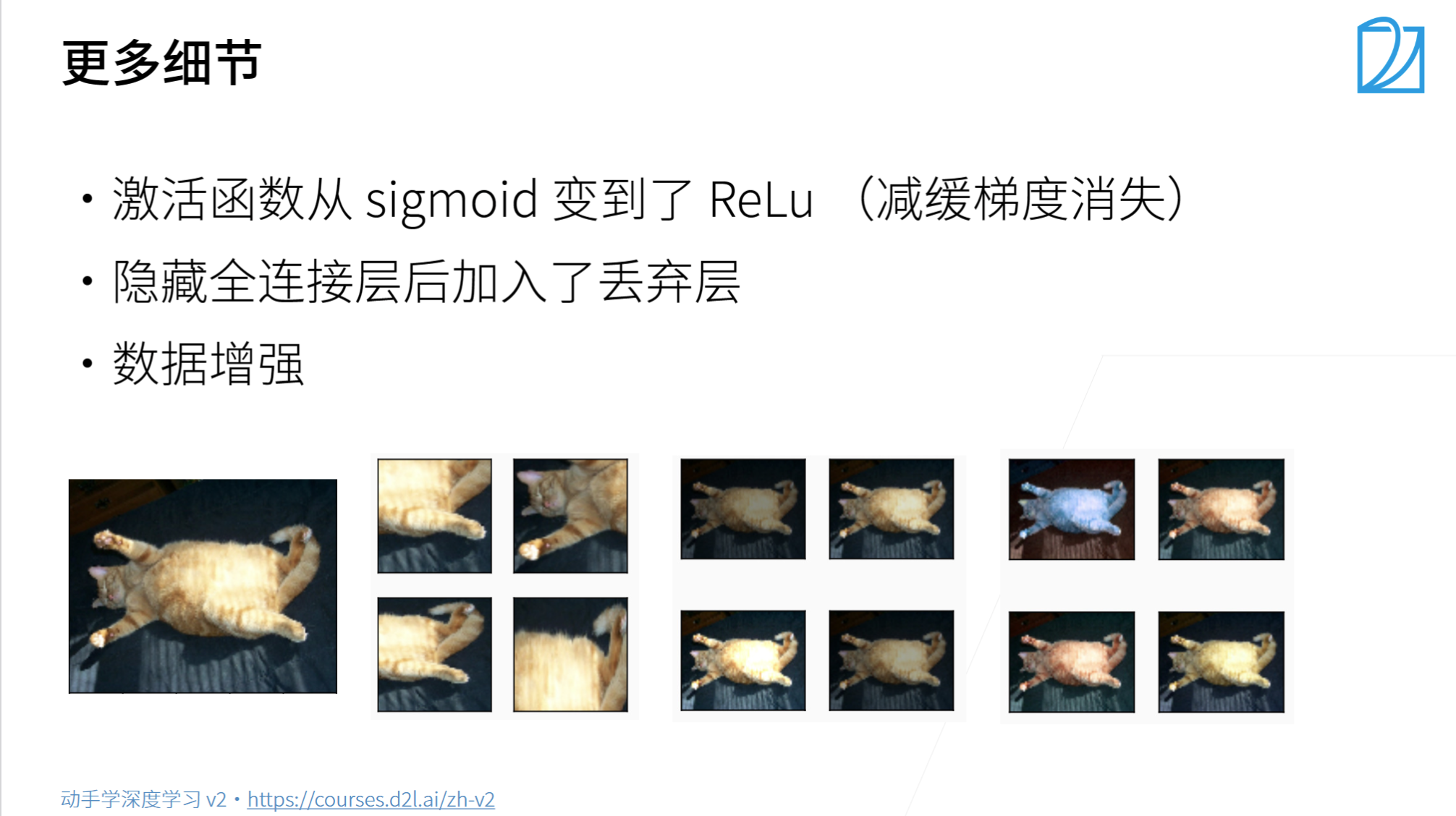

- Compared with LeNet, it adds dropout, ReLU activations, max pooling and data augmentation.

- AlexNet's win in the 2012 ImageNet competition marked the start of a new wave of interest in neural networks.
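Three of the new ingredients listed above (ReLU, max pooling and dropout) can be sketched in plain Python to show what each one computes. This is a minimal illustration on Python lists, not how PyTorch implements these layers. The helper names `relu`, `max_pool2d` and `dropout` are hypothetical. The dropout uses the "inverted" form, which scales surviving values by 1/(1-p) during training; PyTorch's `nn.Dropout` uses the same form. Data augmentation is left out.

```python
import random


def relu(xs):
    """ReLU: keep positive values and replace everything else with 0."""
    return [max(0.0, x) for x in xs]


def max_pool2d(grid, k=3, s=2):
    """Max pooling over a 2D list with a k x k window and stride s (no padding).

    With k=3 and s=2 neighbouring windows overlap, as in AlexNet's pooling layers.
    """
    h, w = len(grid), len(grid[0])
    out_h, out_w = (h - k) // s + 1, (w - k) // s + 1
    return [
        [
            max(grid[i * s + di][j * s + dj] for di in range(k) for dj in range(k))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def dropout(xs, p=0.5, training=True, rng=random):
    """Inverted dropout: zero each value with probability p and scale the
    survivors by 1 / (1 - p) so the expected value is unchanged.
    At test time (training=False) the input passes through unchanged."""
    if not training or p == 0.0:
        return list(xs)
    return [0.0 if rng.random() < p else x / (1.0 - p) for x in xs]


print(relu([-2.0, -0.5, 0.0, 1.5]))                        # [0.0, 0.0, 0.0, 1.5]
grid = [[r * 5 + c for c in range(5)] for r in range(5)]   # 5x5 grid holding 0..24
print(max_pool2d(grid))                                    # [[12, 14], [22, 24]]
print(dropout([1.0, 2.0, 3.0, 4.0], p=0.5, rng=random.Random(0)))
```

The pooling example shows why a 3×3 window with stride 2 is called "overlapping": row and column 2 of the grid fall into more than one window.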

AlexNet Code Implementation

- Import the required libraries

import torch
from torch import nn
from d2l import torch as d2l

- Define the network model

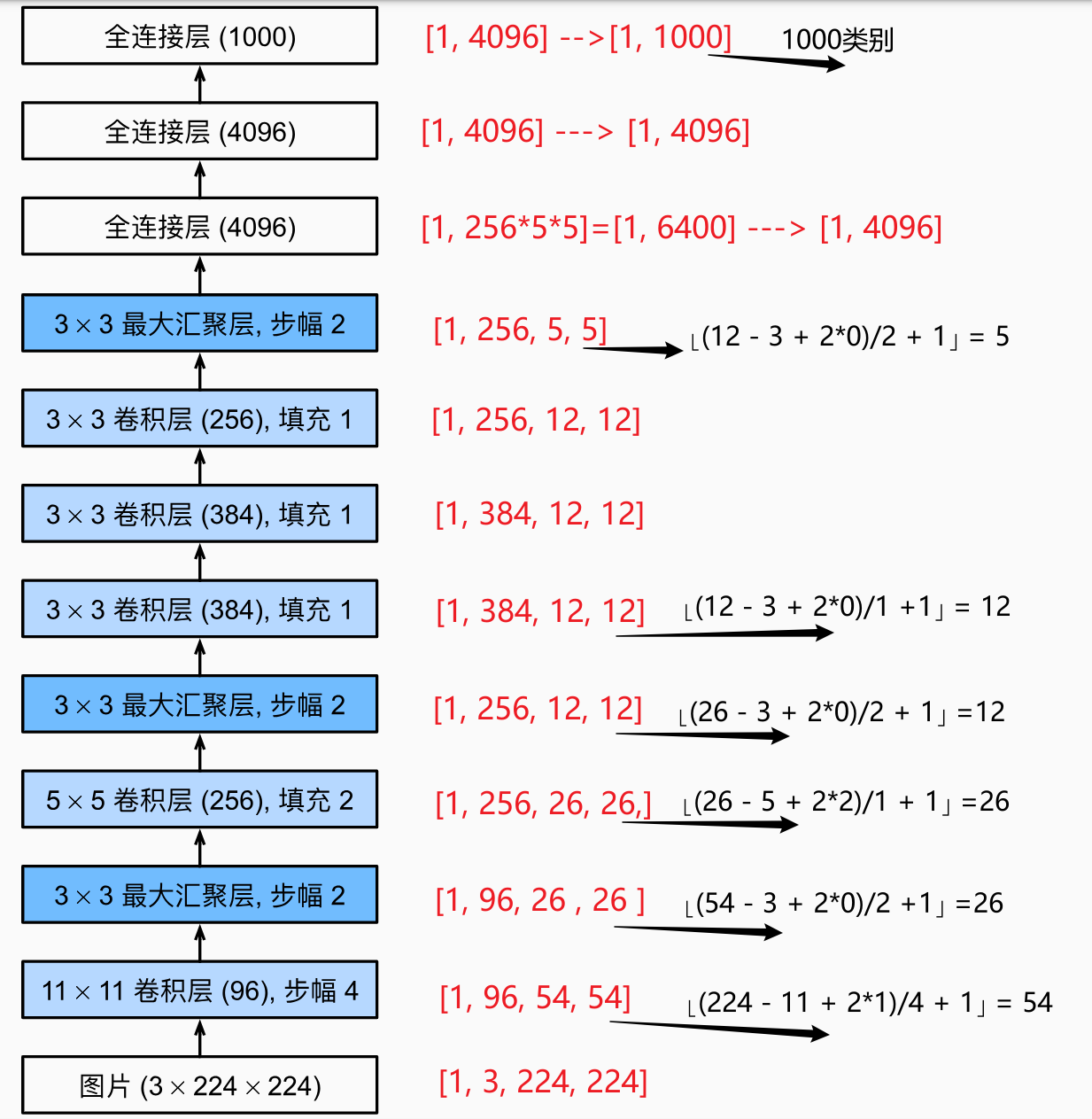

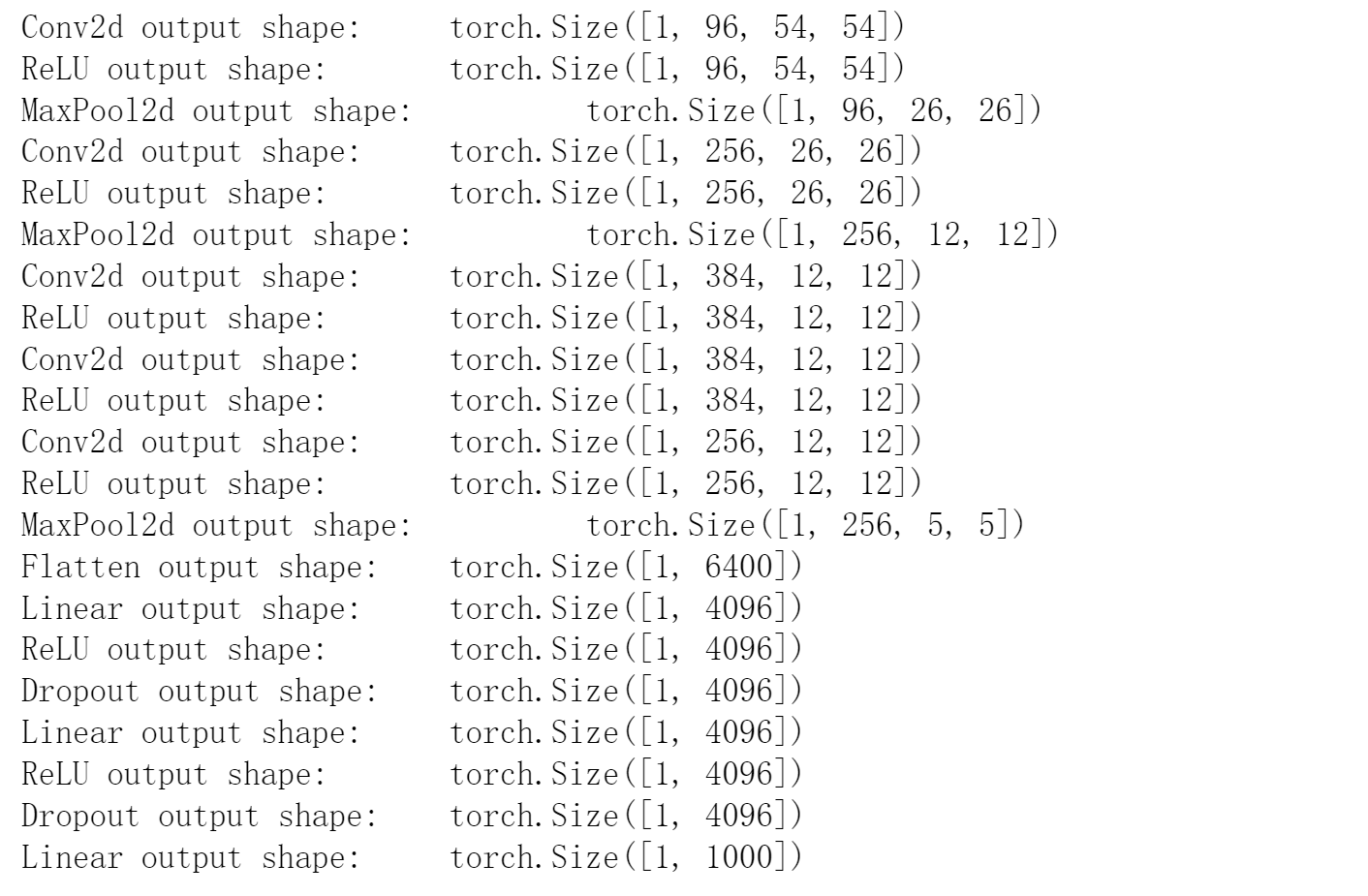

net = nn.Sequential(
    nn.Conv2d(1, 96, kernel_size=11, stride=4, padding=1), nn.ReLU(),  # [1, 96, 54, 54]; 1 input channel because Fashion-MNIST is grayscale
    nn.MaxPool2d(kernel_size=3, stride=2),                              # [1, 96, 26, 26]
    nn.Conv2d(96, 256, kernel_size=5, padding=2), nn.ReLU(),            # [1, 256, 26, 26]
    nn.MaxPool2d(kernel_size=3, stride=2),                              # [1, 256, 12, 12]
    nn.Conv2d(256, 384, kernel_size=3, padding=1), nn.ReLU(),           # [1, 384, 12, 12]
    nn.Conv2d(384, 384, kernel_size=3, padding=1), nn.ReLU(),           # [1, 384, 12, 12]
    nn.Conv2d(384, 256, kernel_size=3, padding=1), nn.ReLU(),           # [1, 256, 12, 12]
    nn.MaxPool2d(kernel_size=3, stride=2),                              # [1, 256, 5, 5]
    nn.Flatten(),                                                       # [1, 256, 5, 5] --> [1, 256*5*5] = [1, 6400]
    nn.Linear(6400, 4096), nn.ReLU(),                                   # [1, 6400] --> [1, 4096]
    nn.Dropout(p=0.5),
    nn.Linear(4096, 4096), nn.ReLU(),                                   # [1, 4096] --> [1, 4096]
    nn.Dropout(p=0.5),
    nn.Linear(4096, 10)                                                 # [1, 4096] --> [1, 10]; Fashion-MNIST has 10 classes (the original paper uses 1000 for ImageNet)
)
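You can work out by hand where the parameters of this network live. A convolution has c_out·c_in·k·k weights plus c_out biases. A fully connected layer has n_out·n_in weights plus n_out biases. The sketch below assumes the layer sizes in the model code (1 input channel and 256·5·5 = 6400 flattened features). The helper names are hypothetical, and `num_classes` is left as a parameter: 10 for Fashion-MNIST, 1000 for ImageNet.

```python
def conv2d_params(c_in, c_out, k):
    """Weights (c_out * c_in * k * k) plus one bias per output channel."""
    return c_out * c_in * k * k + c_out


def linear_params(n_in, n_out):
    """Weight matrix (n_out * n_in) plus one bias per output unit."""
    return n_out * n_in + n_out


def alexnet_param_counts(num_classes):
    """(conv, dense) parameter totals for the layer sizes used in this post."""
    conv = [
        conv2d_params(1, 96, 11),
        conv2d_params(96, 256, 5),
        conv2d_params(256, 384, 3),
        conv2d_params(384, 384, 3),
        conv2d_params(384, 256, 3),
    ]
    dense = [
        linear_params(6400, 4096),
        linear_params(4096, 4096),
        linear_params(4096, num_classes),
    ]
    return sum(conv), sum(dense)


for num_classes in (10, 1000):
    conv, dense = alexnet_param_counts(num_classes)
    total = conv + dense
    print(f"num_classes={num_classes}: conv={conv:,} dense={dense:,} "
          f"total={total:,} dense share={dense / total:.1%}")
```

With num_classes=10 this gives 46,764,746 parameters in total. Of these, 43,040,778 (about 92%) sit in the three fully connected layers, while the five convolutional layers hold only 3,723,968.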

X = torch.randn(1, 1, 224, 224)  # a single random 224×224 single-channel input
for layer in net:
    X = layer(X)
    print(layer.__class__.__name__, 'output shape: \t', X.shape)
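The shapes printed by this loop follow from the usual output-size rule for convolution and pooling layers: floor((n + 2p - k) / s) + 1 for input size n, kernel size k, stride s and padding p. This is what PyTorch computes with the default dilation=1 and ceil_mode=False. The short sketch below replays only the spatial layers of the model; `out_size` is a hypothetical helper.

```python
def out_size(n, k, s=1, p=0):
    """Spatial output size of a conv/pool layer: floor((n + 2p - k) / s) + 1."""
    return (n + 2 * p - k) // s + 1


# (name, kernel, stride, padding) for each layer that changes the spatial size
layers = [
    ("Conv2d", 11, 4, 1),
    ("MaxPool2d", 3, 2, 0),
    ("Conv2d", 5, 1, 2),
    ("MaxPool2d", 3, 2, 0),
    ("Conv2d", 3, 1, 1),
    ("Conv2d", 3, 1, 1),
    ("Conv2d", 3, 1, 1),
    ("MaxPool2d", 3, 2, 0),
]

n = 224
sizes = []
for name, k, s, p in layers:
    n = out_size(n, k, s, p)
    sizes.append(n)
    print(f"{name:<10} -> {n}x{n}")
```

The last size is 5, which is where the 256·5·5 = 6400 input features of the first `nn.Linear` come from.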

- Load the dataset

batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)  # upsample the 28×28 images to AlexNet's 224×224 input size
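`resize=224` enlarges each 28×28 Fashion-MNIST image by a factor of 8 per side. That turns 784 pixels into 50,176, so the images match the input size the network was designed for. The d2l loader applies torchvision's `transforms.Resize` for this step, which interpolates bilinearly by default. The sketch below uses the simpler nearest-neighbour rule instead, so real pixel values differ, but the output shape is the same. `upsample_nearest` is a hypothetical helper.

```python
def upsample_nearest(img, size):
    """Nearest-neighbour resize of a square 2D list to size x size.

    Each output pixel copies the input pixel it falls on; for 28 -> 224
    every input pixel becomes an 8x8 block.
    """
    n = len(img)
    return [[img[i * n // size][j * n // size] for j in range(size)]
            for i in range(size)]


img = [[r * 28 + c for c in range(28)] for r in range(28)]  # dummy 28x28 "image"
big = upsample_nearest(img, 224)
print(len(big), len(big[0]))   # 224 224
print(28 * 28, 224 * 224)      # 784 50176
```

The upsampled images carry no new information. The resize only makes the inputs fit the layer sizes, at the cost of 64× more input pixels per image.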

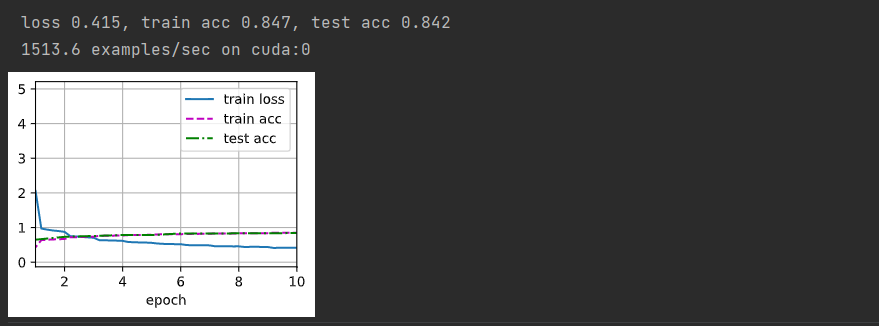

- Train the model

lr, num_epochs = 0.01, 20
d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())  # uses the first GPU if one is available, otherwise the CPU
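Here is a rough sense of how much work this training run does. The sketch assumes the 60,000-image Fashion-MNIST training set and the PyTorch `DataLoader` default `drop_last=False`, which keeps the final partial batch. It also assumes that `train_ch6` performs plain minibatch SGD (`torch.optim.SGD` without momentum), as in the d2l book's version. The `sgd_step` helper is a hypothetical illustration of that update rule, w ← w - lr·∂L/∂w.

```python
import math

num_train = 60_000                 # Fashion-MNIST training-set size
batch_size, num_epochs, lr = 256, 20, 0.01

# Assumes drop_last=False, so the last, smaller batch is still used
batches_per_epoch = math.ceil(num_train / batch_size)
total_updates = batches_per_epoch * num_epochs
print(batches_per_epoch, total_updates)   # 235 4700


def sgd_step(params, grads, lr):
    """One plain SGD update: subtract lr * gradient from every parameter."""
    return [w - lr * g for w, g in zip(params, grads)]


print(sgd_step([1.0, -2.0], [0.5, -1.0], lr))
```

Under these assumptions, the 20 epochs amount to 4,700 parameter updates, each one a forward and backward pass over a batch of 256 images at 224×224.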
