Andrew Ng Deep Learning Study Notes: C5W3 (Sequence Models and Attention Mechanism), Assignment 1: Neural Machine Translation

These notes outline the main content and approach of the assignment. The complete assignment files are available at: https://github.com/pandenghuang/Andrew-Ng-Deep-Learning-notes/tree/master/assignments/C5W3/Assignment

For full screenshots of the assignment, see the end of this post.

Neural Machine Translation

Welcome to your first programming assignment for this week!

You will build a Neural Machine Translation (NMT) model to translate human readable dates ("25th of June, 2009") into machine readable dates ("2009-06-25"). You will do this using an attention model, one of the most sophisticated sequence to sequence models.

This notebook was produced together with NVIDIA's Deep Learning Institute.

...

1 - Translating human readable dates into machine readable dates

The model you will build here could be used to translate from one language to another, such as translating from English to Hindi. However, language translation requires massive datasets and usually takes days of training on GPUs. To give you a place to experiment with these models even without using massive datasets, we will instead use a simpler "date translation" task.

The network will take as input a date written in one of many possible formats (e.g. "the 29th of August 1958", "03/30/1968", "24 JUNE 1987") and translate it into a standardized, machine readable date (e.g. "1958-08-29", "1968-03-30", "1987-06-24"). We will have the network learn to output dates in the common machine-readable format YYYY-MM-DD.
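To make the target format concrete, the same mapping can be written by hand for a few known layouts. The sketch below is only a rule-based reference for what the network has to learn from examples. The `FORMATS` list and the `to_iso` helper are illustrative assumptions and are not part of the assignment.

```python
import re
from datetime import datetime

# Hypothetical list of input layouts; the real dataset contains more variety.
FORMATS = ["%d %B %Y", "%m/%d/%Y", "%d of %B %Y"]


def to_iso(text):
    """Return the date as YYYY-MM-DD, or None if no known layout matches."""
    # Strip ordinal suffixes ("29th" -> "29") and a leading "the".
    cleaned = re.sub(r"(\d+)(st|nd|rd|th)\b", r"\1", text.strip(), flags=re.IGNORECASE)
    cleaned = re.sub(r"^the\s+", "", cleaned, flags=re.IGNORECASE)
    for fmt in FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue  # try the next layout
    return None


for example in ["the 29th of August 1958", "03/30/1968", "24 JUNE 1987"]:
    print(example, "->", to_iso(example))
```

Every new layout needs another hand-written rule here, which is the kind of manual effort a learned sequence to sequence model is meant to avoid.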

1.1 - Dataset

We will train the model on a dataset of 10000 human readable dates and their equivalent, standardized, machine readable dates. Let's run the following cells to load the dataset and print some examples.
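Before a model can be trained on such pairs, each date string has to be turned into numbers. Below is a minimal sketch of character-level encoding, assuming a fixed input length and special `<unk>` and `<pad>` tokens. The helper names and the length of 12 are hypothetical, and the notebook's own utilities may differ.

```python
def build_vocab(strings, extra=("<unk>", "<pad>")):
    """Map every character seen in `strings`, plus the special tokens, to an index."""
    chars = sorted(set("".join(strings)))
    return {token: i for i, token in enumerate(chars + list(extra))}


def string_to_int(text, length, vocab):
    """Lowercase, drop commas, truncate to `length`, then pad with <pad>."""
    text = text.lower().replace(",", "")[:length]
    ids = [vocab.get(ch, vocab["<unk>"]) for ch in text]
    ids += [vocab["<pad>"]] * (length - len(ids))
    return ids


def one_hot(ids, vocab_size):
    """Turn a list of indices into a list of one-hot rows."""
    return [[1 if j == i else 0 for j in range(vocab_size)] for i in ids]


# Toy usage with two made-up training strings.
vocab = build_vocab(["3 may 1979", "5 april 2009"])
ids = string_to_int("3 May, 1979", 12, vocab)
rows = one_hot(ids, len(vocab))
print(ids)
```

Padding every input to the same length lets a whole batch be stored as one fixed-size array.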

...

2 - Neural machine translation with attention

If you had to translate a book's paragraph from French to English, you would not read the whole paragraph, then close the book and translate. Even during the translation process, you would read/re-read and focus on the parts of the French paragraph corresponding to the parts of the English you are writing down.

The attention mechanism tells a Neural Machine Translation model which parts of the input to attend to at each step.

2.1 - Attention mechanism

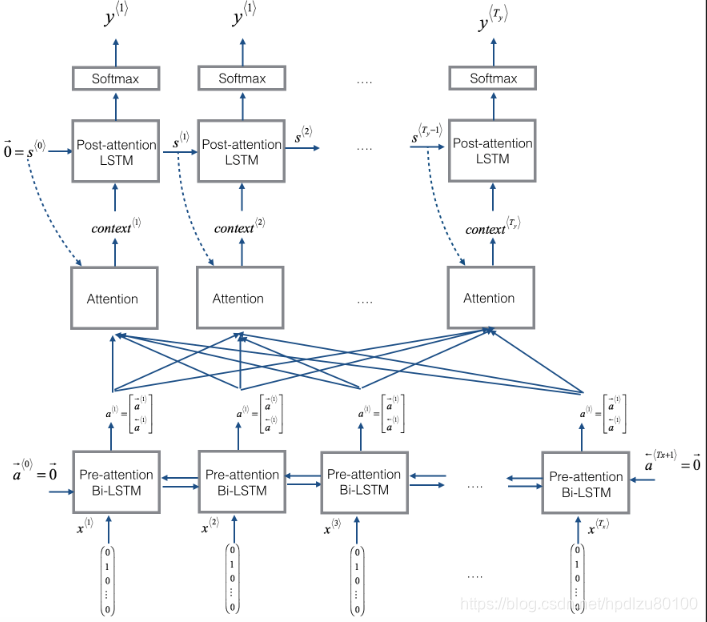

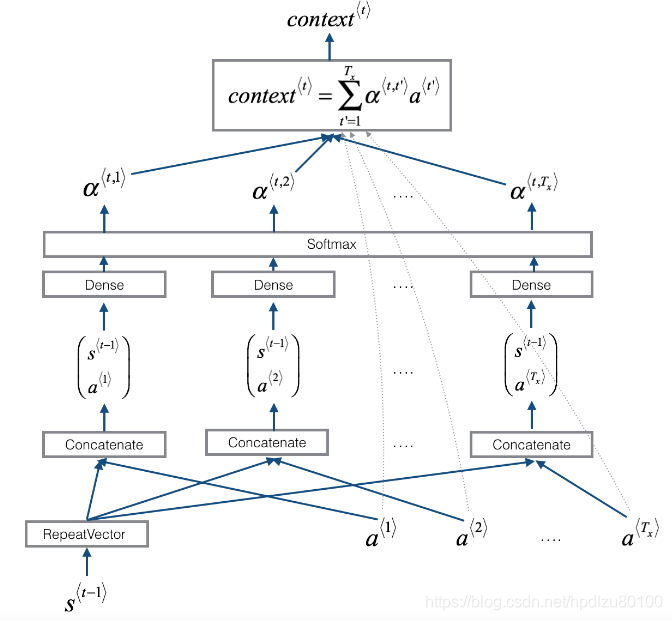

In this part, you will implement the attention mechanism presented in the lecture videos. Here is a figure to remind you how the model works. The diagram on the left shows the attention model. The diagram on the right shows what one "Attention" step does to calculate the attention variables 𝛼〈𝑡,𝑡′〉, which are used to compute the context variable 𝑐𝑜𝑛𝑡𝑒𝑥𝑡〈𝑡〉 for each timestep in the output (𝑡=1,…,𝑇𝑦).
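One "Attention" step can be sketched in plain Python: score every input position against the previous decoder state, normalize the scores with a softmax to get the weights 𝛼〈𝑡,𝑡′〉, and take the weighted sum of the encoder activations as 𝑐𝑜𝑛𝑡𝑒𝑥𝑡〈𝑡〉. This is a simplified illustration and not the notebook's Keras code. In particular, `dot_score` is an assumed stand-in for the small neural network that computes the scores in the lectures.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot_score(s_prev, a_t):
    # Assumption: a dot product replaces the learned scoring network.
    return sum(x * y for x, y in zip(s_prev, a_t))


def attention_step(a, s_prev, score=dot_score):
    """a: Tx encoder activation vectors; s_prev: previous decoder state.

    Returns (alphas, context), where the alphas sum to 1 over the Tx inputs
    and context[k] = sum over t' of alphas[t'] * a[t'][k].
    """
    energies = [score(s_prev, a_t) for a_t in a]
    alphas = softmax(energies)
    dim = len(a[0])
    context = [sum(w * a_t[k] for w, a_t in zip(alphas, a)) for k in range(dim)]
    return alphas, context


# Toy usage: Tx = 3 input positions, 2-dimensional activations.
a = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
alphas, context = attention_step(a, s_prev=[2.0, 0.0])
print(alphas, context)
```

The step is repeated once per output timestep, so the number of inputs 𝑇𝑥 and the number of outputs 𝑇𝑦 do not have to match.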

...

3 - Visualizing Attention (Optional / Ungraded)

Since the problem has a fixed output length of 10, it is also possible to carry out this task using 10 different softmax units to generate the 10 characters of the output. But one advantage of the attention model is that each part of the output (say the month) knows it needs to depend only on a small part of the input (the characters in the input giving the month). We can visualize what part of the output is looking at what part of the input.

3.1 - Getting the activations from the network

Let's now visualize the attention values in your network. We'll propagate an example through the network, then visualize the values of 𝛼〈𝑡,𝑡′〉.
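As a text-only stand-in for the plot, an attention map can be read row by row: each row holds the weights 𝛼〈𝑡,𝑡′〉 for one output character, and the largest entry marks the input character it attends to most. The sketch below assumes the map is already available as a list of rows. The `strongest_alignment` helper is hypothetical, and the numbers are invented for illustration; they are not output from the trained model.

```python
def strongest_alignment(attention_map, source, target):
    """For each output character, return (output char, most-attended input char, weight)."""
    pairs = []
    for t, row in enumerate(attention_map):
        t_prime = max(range(len(row)), key=row.__getitem__)
        pairs.append((target[t], source[t_prime], row[t_prime]))
    return pairs


source = "9 may"   # Tx = 5 input characters
target = "05-09"   # first 5 output characters, kept short for the example

# Invented attention weights: one row per output character, each row sums to 1.
attention_map = [
    [0.05, 0.05, 0.40, 0.30, 0.20],
    [0.05, 0.05, 0.30, 0.25, 0.35],
    [0.20, 0.40, 0.15, 0.15, 0.10],
    [0.60, 0.20, 0.10, 0.05, 0.05],
    [0.80, 0.10, 0.05, 0.03, 0.02],
]

for out_ch, in_ch, weight in strongest_alignment(attention_map, source, target):
    print(repr(out_ch), "attends most to", repr(in_ch), "with weight", weight)
```

A heatmap of the same matrix, with input characters on one axis and output characters on the other, conveys the full distribution rather than only the maximum.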

...

Congratulations!

You have come to the end of this assignment.

Here's what you should remember from this notebook:

- Machine translation models can be used to map from one sequence to another. They are useful not just for translating human languages (like French->English) but also for tasks like date format translation.

- An attention mechanism allows a network to focus on the most relevant parts of the input when producing a specific part of the output.

- A network using an attention mechanism can translate from inputs of length 𝑇𝑥 to outputs of length 𝑇𝑦, where 𝑇𝑥 and 𝑇𝑦 can be different.

- You can visualize attention weights 𝛼〈𝑡,𝑡′〉 to see what the network is paying attention to while generating each output.

Congratulations on finishing this assignment! You are now able to implement an attention model and use it to learn complex mappings from one sequence to another.

Full screenshots of the assignment:
